Kong Ingress Controller Deployment

The Kong Ingress Controller is designed to be deployed in a variety of ways depending on the use case. This document explains the components involved and the choices you can make for a specific use case.

- Kubernetes Resources: Various Kubernetes resources required to run the Kong Ingress Controller.

- Deployment options: A high-level explanation of the choices to consider when customizing the deployment to best serve a specific use case.

Kubernetes Resources

The following resources are used to run the Kong Ingress Controller:

- Namespace

- Custom resources

- RBAC permissions

- Ingress Controller Deployment

- Kong Proxy service

- Database deployment and migrations

These resources are created if the reference deployment manifests are used to deploy the Kong Ingress Controller. The resources are explained below for users to gain an understanding of how they are used, so that they can be tweaked as necessary for a specific use-case.

Namespace

optional

The Kong Ingress Controller can be deployed in any namespace.

If Kong Ingress Controller is being used to proxy traffic for all namespaces in a Kubernetes cluster, which is generally the case, it is recommended that it is installed in a dedicated `kong` namespace, but it is not required to do so.

The example deployments present in this repository automatically create a `kong` namespace and deploy resources into that namespace.
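
As a minimal sketch, a dedicated namespace like the one described above can be declared with a manifest such as the following. The name `kong` matches the namespace used by the example deployments; any other name would work equally well.

```yaml
# Dedicated namespace for the Kong Ingress Controller and its resources.
apiVersion: v1
kind: Namespace
metadata:
  name: kong
```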

Custom Resources

required

The Ingress resource in Kubernetes is a fairly narrow and ambiguous API, and doesn’t offer resources to describe the specifics of proxying. To overcome this limitation, custom resources are used as an “extension” to the existing Ingress API.

A few custom resources are bundled with the Kong Ingress Controller to configure settings that are specific to Kong and provide fine-grained control over the proxying behavior.

Please refer to the custom resources concept document for details.
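
For illustration, the sketch below shows one way a custom resource can extend an Ingress. It assumes the `KongPlugin` resource from the `configuration.konghq.com/v1` API group, Kong's `rate-limiting` plugin, and the `konghq.com/plugins` annotation. The resource names, the `demo` namespace, the `echo` Service, and the limit values are hypothetical.

```yaml
# A KongPlugin describing proxy behavior that the Ingress API cannot express.
apiVersion: configuration.konghq.com/v1
kind: KongPlugin
metadata:
  name: rate-limit-example   # hypothetical name
  namespace: demo            # hypothetical namespace
plugin: rate-limiting
config:
  minute: 5                  # illustrative limit: 5 requests per minute
  policy: local
---
# An Ingress that attaches the plugin through an annotation.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: echo                 # hypothetical name
  namespace: demo
  annotations:
    konghq.com/plugins: rate-limit-example
spec:
  ingressClassName: kong
  rules:
    - http:
        paths:
          - path: /echo
            pathType: Prefix
            backend:
              service:
                name: echo   # hypothetical Service
                port:
                  number: 80
```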

RBAC permissions

required

The Kong Ingress Controller communicates with the Kubernetes API-server and dynamically configures Kong to automatically load balance across pods of a service as any service is scaled in or out.

For this reason, it requires RBAC permissions to access resources stored in the Kubernetes object store.

It needs read permissions (get, list, watch) on the following Kubernetes resources:

- EndpointSlices

- Events

- Nodes

- Pods

- Secrets

- Ingress

- IngressClassParameters

- KongClusterPlugins

- KongPlugins

- KongConsumers

- KongIngress

- TCPIngresses

- UDPIngresses

By default, the controller listens for the above resources across all namespaces and needs access to these resources at the cluster level (using `ClusterRole` and `ClusterRoleBinding`).

In addition to these, it needs:

- Create, list, get, watch, delete and update permissions on `ConfigMaps` and `Leases` to facilitate leader-election. Please read this document for more details.

- Update permission on the Ingress resource to update the status of the Ingress resource.

If the Kong Ingress Controller is listening for events on a single namespace, these permissions can be restricted to that namespace using `Role` and `RoleBinding` resources.

In addition to these, it is necessary to create a `ServiceAccount` which has the above permissions. The Ingress Controller Pod is then associated with this `ServiceAccount`. This gives the Ingress Controller process the necessary authentication and authorization tokens to communicate with the Kubernetes API-server.

rbac.yaml contains the permissions needed for the Kong Ingress Controller to operate correctly.
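
The following abridged sketch illustrates how the permissions described in this section could be expressed. It is not a copy of `rbac.yaml`. The object names are hypothetical, the API group assignments are assumptions based on the standard Kubernetes groups and the `configuration.konghq.com` group, and the use of the `ingresses/status` subresource for status updates is also an assumption. `IngressClassParameters` is omitted because its exact resource name is not given here.

```yaml
# ServiceAccount that the Ingress Controller Pod runs as.
apiVersion: v1
kind: ServiceAccount
metadata:
  name: kong-serviceaccount      # hypothetical name
  namespace: kong
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kong-ingress-example     # hypothetical name
rules:
  # Read access to core resources.
  - apiGroups: [""]
    resources: ["events", "nodes", "pods", "secrets"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["discovery.k8s.io"]
    resources: ["endpointslices"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["networking.k8s.io"]
    resources: ["ingresses"]
    verbs: ["get", "list", "watch"]
  # Status updates on Ingress resources (subresource is an assumption).
  - apiGroups: ["networking.k8s.io"]
    resources: ["ingresses/status"]
    verbs: ["update"]
  # Read access to Kong custom resources.
  - apiGroups: ["configuration.konghq.com"]
    resources:
      - kongclusterplugins
      - kongplugins
      - kongconsumers
      - kongingresses
      - tcpingresses
      - udpingresses
    verbs: ["get", "list", "watch"]
  # Leader election.
  - apiGroups: [""]
    resources: ["configmaps"]
    verbs: ["create", "list", "get", "watch", "delete", "update"]
  - apiGroups: ["coordination.k8s.io"]
    resources: ["leases"]
    verbs: ["create", "list", "get", "watch", "delete", "update"]
---
# Bind the permissions to the ServiceAccount at the cluster level.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kong-ingress-example     # hypothetical name
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kong-ingress-example
subjects:
  - kind: ServiceAccount
    name: kong-serviceaccount
    namespace: kong
```

For a single-namespace setup, the same rules could be placed in a `Role` and bound with a `RoleBinding` in that namespace instead.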

Ingress Controller deployment

required

A Kong Ingress deployment consists of the Ingress Controller deployed alongside Kong. The deployment differs depending on whether a database is being used.

The deployment(s) is the core component that actually runs the Kong Ingress Controller.

See the database section below for details.

Kong Proxy service

required

Once the Kong Ingress Controller is deployed, one service is needed to expose Kong outside the Kubernetes cluster so that it can receive all traffic that is destined for the cluster and route it appropriately.

`kong-proxy` is a Kubernetes service which points to the Kong pods which are capable of proxying request traffic. This service is usually of type `LoadBalancer`, but it is not required to be.

The IP address of this service should be used to configure DNS records of all the domains that Kong should be proxying, to route the traffic to Kong.
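
A minimal sketch of such a Service is shown below. It assumes that the Kong pods carry the hypothetical label `app: kong` and that Kong listens on its default proxy ports, 8000 for HTTP and 8443 for HTTPS.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: kong-proxy
  namespace: kong
spec:
  type: LoadBalancer
  selector:
    app: kong              # hypothetical label on the Kong pods
  ports:
    - name: proxy
      protocol: TCP
      port: 80
      targetPort: 8000     # Kong's default HTTP proxy port
    - name: proxy-ssl
      protocol: TCP
      port: 443
      targetPort: 8443     # Kong's default HTTPS proxy port
```

Once the load balancer is provisioned, its external address appears in the Service status (for example via `kubectl get service kong-proxy -n kong`) and can be used for the DNS records.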

Database deployment and migration

optional

The Kong Ingress Controller can run with or without a database. If a database is being deployed, then the following resources are required:

- A `StatefulSet` which runs a PostgreSQL pod backed with a `PersistentVolume` to store Kong’s configuration.

- An internal Service which resolves to the PostgreSQL pod. This ensures that Kong can find the PostgreSQL instance using DNS inside the Kubernetes cluster.

- A batch `Job` to run schema migrations. This must be executed once to bootstrap Kong’s database schema. Please note that on any upgrade of the Kong version, another `Job` will need to be created if the newer version contains any migrations.

To figure out if you should be using a database or not, please refer to the database section below.
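
As a rough sketch, the internal Service and the migration `Job` from the list above could look like the following. The object names, the `app: postgres` label, the `kong-postgres` Secret, and the `kong:3.4` image tag are assumptions for illustration. The `StatefulSet` for PostgreSQL is omitted for brevity.

```yaml
# Internal Service so that Kong can resolve PostgreSQL through cluster DNS.
apiVersion: v1
kind: Service
metadata:
  name: postgres
  namespace: kong
spec:
  selector:
    app: postgres                 # hypothetical label on the PostgreSQL pod
  ports:
    - name: pgql
      protocol: TCP
      port: 5432
      targetPort: 5432
---
# One-off Job that bootstraps Kong's database schema.
apiVersion: batch/v1
kind: Job
metadata:
  name: kong-migrations
  namespace: kong
spec:
  template:
    spec:
      restartPolicy: OnFailure
      containers:
        - name: kong-migrations
          image: kong:3.4         # assumed tag; use the Kong version you deploy
          command: ["kong", "migrations", "bootstrap"]
          env:
            - name: KONG_DATABASE
              value: postgres
            - name: KONG_PG_HOST
              value: postgres     # name of the Service above
            - name: KONG_PG_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: kong-postgres   # hypothetical Secret
                  key: password
```

For an upgrade, a similar `Job` would typically run `kong migrations up` followed by `kong migrations finish` rather than `bootstrap`.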

Deployment options

The following are the different options to consider while deploying the Kong Ingress Controller for your specific use case:

- Kubernetes Service Type: Choose between LoadBalancer and NodePort

- Database: Backing Kong with a Database or running without a database

- Multiple Ingress Controllers: Running multiple Kong Ingress Controllers inside the same Kubernetes cluster

- Runtime: Using Kong Gateway (OSS) or Kong Gateway Enterprise (for Enterprise customers)

- Gateway Discovery: Dynamically discovering Kong’s Admin API endpoints

- Konnect integration: Integration with Kong’s Konnect platform

Kubernetes Service Types

Once deployed, any Ingress Controller needs to be exposed outside the Kubernetes cluster to start accepting external traffic. In Kubernetes, the Service abstraction is used to expose an application to the rest of the cluster or outside the cluster.

If your Kubernetes cluster is running in a cloud environment, where Load Balancers can be provisioned with relative ease, it is recommended that you use a Service of type `LoadBalancer` to expose Kong to the outside world.

For the Kong Ingress Controller to function correctly, it is also required that an L4 (or TCP) Load Balancer is used and not an L7 (HTTP(s)) one.

If your Kubernetes cluster doesn’t support a service of type `LoadBalancer`, then it is possible to use a service of type `NodePort`.
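
A `NodePort` variant of the proxy Service might look like the sketch below. The `app: kong` label and the `nodePort` value are hypothetical; the node port only needs to fall within the cluster's configured range, which is 30000-32767 by default.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: kong-proxy
  namespace: kong
spec:
  type: NodePort
  selector:
    app: kong              # hypothetical label on the Kong pods
  ports:
    - name: proxy
      protocol: TCP
      port: 80
      targetPort: 8000     # Kong's default HTTP proxy port
      nodePort: 30080      # illustrative value in the default range
```

Traffic sent to port 30080 on any node's IP address is then forwarded to the Kong pods.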

Database

Until Kong 1.0, a database was required to run Kong. Kong 1.1 introduced a new mode, DB-less, in which Kong can be configured using a config file, removing the need to use a database.

It is possible to deploy and run the Kong Ingress Controller with or without a database. The choice depends on the specific use-case and results in no loss of functionality.

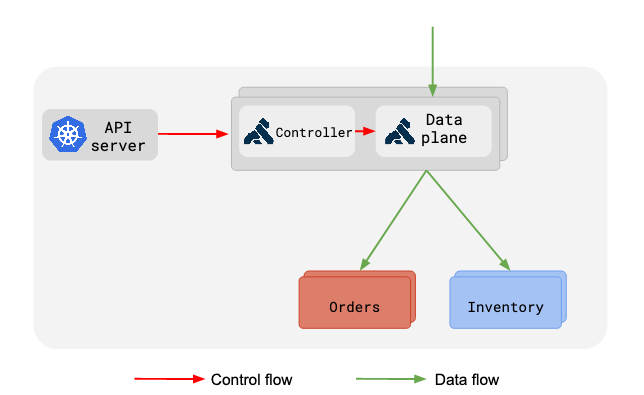

Without a database

In DB-less deployments, Kong’s Kubernetes ingress controller runs alongside and dynamically configures Kong as per the changes it receives from the Kubernetes API server.

Following figure shows what this deployment looks like:

In this deployment, only one Deployment is required. It consists of a Pod with two containers: a Kong container which proxies the requests and a controller container which configures Kong.

The `kong-proxy` service points to the ports of the Kong container in the above deployment.

Since each pod contains a controller and a Kong container, scaling out simply requires horizontally scaling this deployment to handle more traffic or to add redundancy in the infrastructure.
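
The two-container layout described above can be sketched as follows. This is an abridged illustration rather than the reference manifest. The image tags, labels, and object names are assumptions, and the `CONTROLLER_*` environment variables assume the controller's convention of mapping CLI flags to environment variables.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ingress-kong
  namespace: kong
spec:
  replicas: 2                          # scale horizontally for traffic or redundancy
  selector:
    matchLabels:
      app: kong
  template:
    metadata:
      labels:
        app: kong
    spec:
      serviceAccountName: kong-serviceaccount   # hypothetical ServiceAccount
      containers:
        # Kong container: proxies the requests.
        - name: proxy
          image: kong:3.4              # assumed tag
          env:
            - name: KONG_DATABASE
              value: "off"             # DB-less mode
            - name: KONG_ADMIN_LISTEN
              value: "127.0.0.1:8444 ssl"
          ports:
            - name: proxy
              containerPort: 8000
            - name: proxy-ssl
              containerPort: 8443
        # Controller container: configures Kong through its Admin API.
        - name: ingress-controller
          image: kong/kubernetes-ingress-controller:2.12   # assumed tag
          env:
            - name: CONTROLLER_KONG_ADMIN_URL
              value: https://127.0.0.1:8444
            - name: CONTROLLER_KONG_ADMIN_TLS_SKIP_VERIFY
              value: "true"
            - name: CONTROLLER_PUBLISH_SERVICE
              value: kong/kong-proxy
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
```

Because both containers share the Pod's network namespace, the controller can reach Kong's Admin API on the loopback address, and the Admin API does not need to be exposed outside the Pod.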

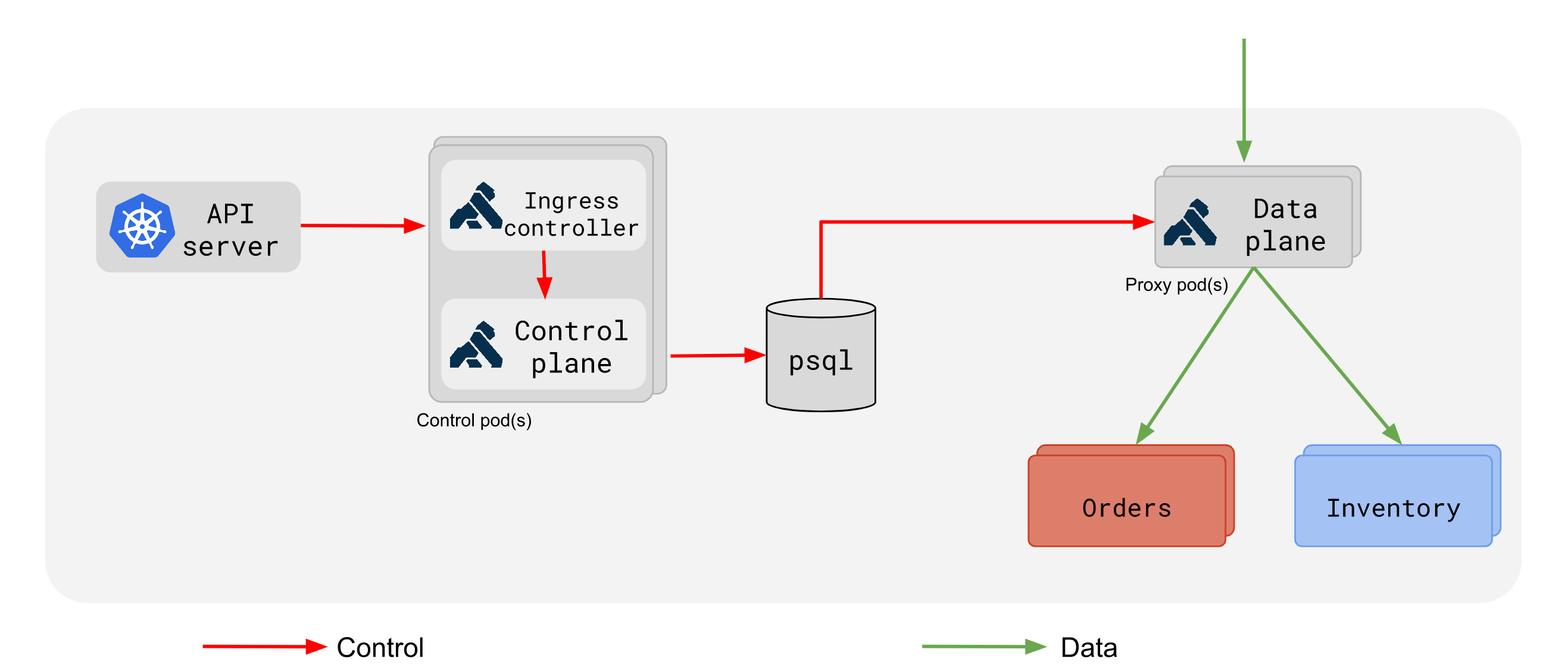

With a Database

In a deployment where Kong is backed by a DB, the deployment architecture is a little different.

Please refer to the below figure:

In this type of deployment, there are two types of deployments created, separating the control and data flow:

- Control-plane: This deployment consists of a pod(s) running the controller alongside a Kong container, which can only configure the database. This deployment does not proxy any traffic but only configures Kong. If multiple replicas of this pod are running, a leader election process will ensure that only one of the pods is configuring Kong’s database at a time.

- Data-plane: This deployment consists of pods running a single Kong container which can proxy traffic based on the configuration it loads from the database. This deployment should be scaled to respond to change in traffic profiles and add redundancy to safeguard from node failures.

- Database: The database is used to store Kong’s configuration and propagate changes to all the Kong pods in the cluster. All Kong containers in the cluster should be able to connect to this database.

A database-driven deployment should be used if your use case requires dynamic creation of Consumers and/or credentials in Kong at a scale large enough that the consumers will not fit entirely in memory.

Multiple Ingress Controllers

It is possible to run multiple instances of the Kong Ingress Controller or run a Kong Ingress Controller alongside other Ingress Controllers inside the same Kubernetes cluster.

There are a few different ways of accomplishing this:

- Using the `kubernetes.io/ingress.class` annotation: It is common to deploy Ingress Controllers on a cluster level, meaning an Ingress Controller will satisfy Ingress rules created in all the namespaces inside a Kubernetes cluster. Use the annotation on Ingress and Custom resources to segment the Ingress resources between multiple Ingress Controllers. Warning! When you use another Ingress Controller that is the default for the cluster (without setting any `kubernetes.io/ingress.class`), be careful when using the default `kong` ingress class. The default `kong` ingress class has special behavior: any Ingress resource that is not annotated is picked up. Therefore, with an ingress class other than `kong`, you have to set that ingress class on every Kong CRD object (plugin, consumer) that you use.

- Namespace based isolation: Kong Ingress Controller supports a deployment option where it will satisfy Ingress resources in a specific namespace. With this model, one can deploy a controller in multiple namespaces and they will run in an isolated manner.

- If you are using Kong Gateway Enterprise, you can run multiple Ingress Controllers pointing to the same database and configuring different workspaces inside Kong Gateway Enterprise. With such a deployment, one can use either of the above two approaches to segment Ingress resources into different Workspaces in Kong Gateway Enterprise.
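
The first two approaches above can be sketched as follows. The class name `kong-internal`, the `team-a` namespace, and the resource names are hypothetical. The example assumes that a controller instance has been started with a matching ingress class.

```yaml
# Ingress handled only by the controller whose ingress class is "kong-internal".
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: internal-api              # hypothetical name
  namespace: team-a               # hypothetical namespace
  annotations:
    kubernetes.io/ingress.class: kong-internal
spec:
  rules:
    - http:
        paths:
          - path: /internal
            pathType: Prefix
            backend:
              service:
                name: internal-api   # hypothetical Service
                port:
                  number: 80
---
# Kong custom resources carry the same class so the same controller picks them up.
apiVersion: configuration.konghq.com/v1
kind: KongConsumer
metadata:
  name: example-consumer          # hypothetical name
  namespace: team-a
  annotations:
    kubernetes.io/ingress.class: kong-internal
username: example-consumer
```

On the controller side, the class and the watched namespace are typically set through the `--ingress-class` and `--watch-namespace` flags, or their environment variable equivalents. The fragment below belongs in the controller container spec and is not a complete manifest:

```yaml
env:
  - name: CONTROLLER_INGRESS_CLASS
    value: kong-internal          # must match the annotation value above
  - name: CONTROLLER_WATCH_NAMESPACE
    value: team-a                 # restricts the controller to one namespace
```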

Runtime

The Kong Ingress Controller is compatible with a variety of runtimes:

Kong Gateway (OSS)

This is the Open-Source Gateway runtime. The Ingress Controller is primarily developed against releases of the open-source gateway.

Kong Gateway Enterprise

The Kong Ingress Controller is also compatible with the full-blown version of Kong Gateway Enterprise. This runtime ships with Kong Manager, Kong Portal, and a number of other enterprise-only features. This doc provides a high-level overview of the architecture.

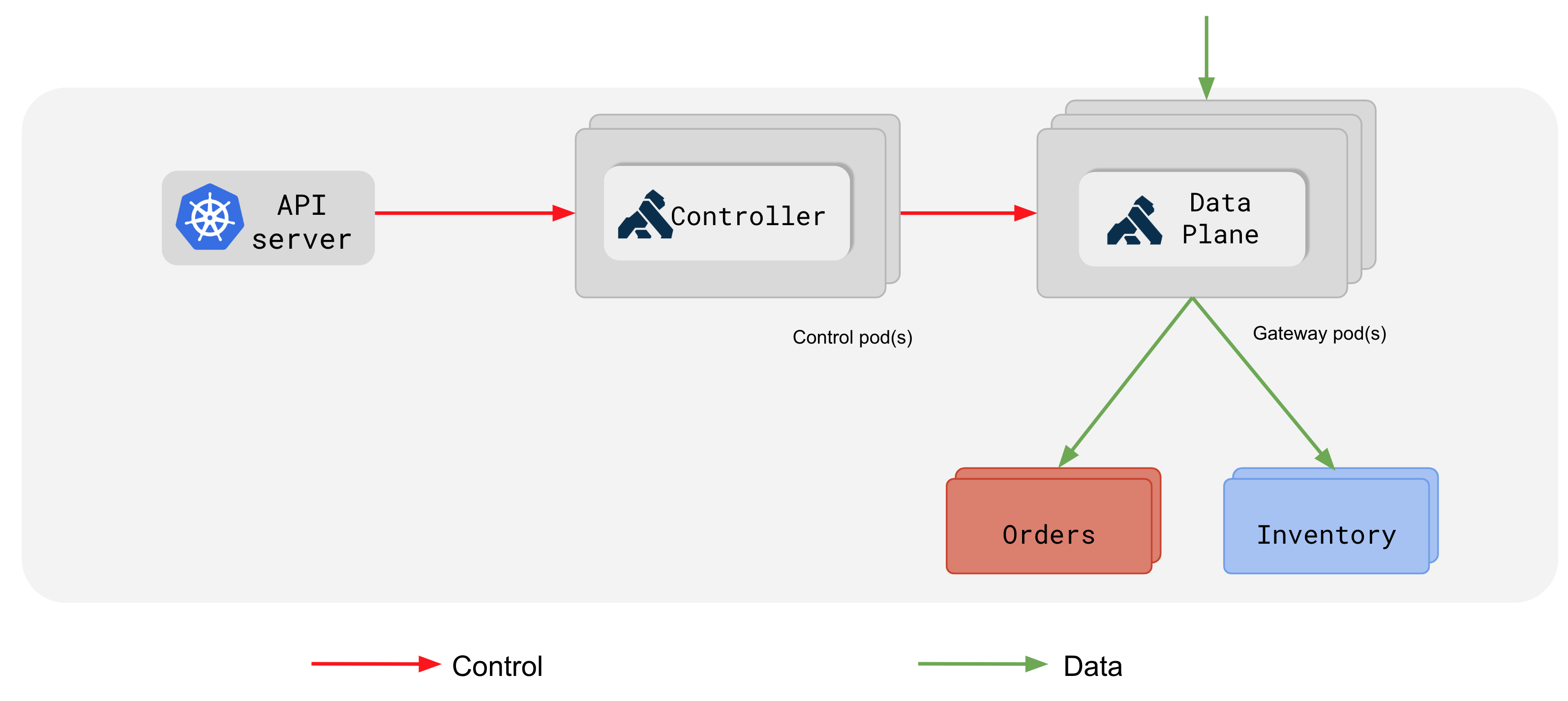

Gateway Discovery

Kong Ingress Controller can also be configured to discover deployed Gateways. This works by using a separate Kong Gateway deployment which exposes a Kubernetes Service which is backed by Kong Admin API endpoints. This Service endpoints can be then discovered by Kong Ingress Controller through Kubernetes EndpointSlice watch.

Gateway Discovery is only supported with DB-less deployments of Kong.

The overview of this type of deployment can be found on the figure below:

In this type of architecture, there are two types of Kubernetes deployments created, separating the control and data flow:

- Control-plane: This deployment consists of a pod(s) running the controller. This deployment does not proxy any traffic but only configures Kong. Leader election is enforced in this deployment when running with Gateway Discovery enabled to ensure only 1 controller instance is sending configuration to data planes at a time.

- Data-plane: This deployment consists of pods running Kong which can proxy traffic based on the configuration it receives via the Admin API. This deployment should be scaled to respond to change in traffic profiles and add redundancy to safeguard from node failures.

Both of those deployments can be scaled independently.

For more hands-on experience with Gateway Discovery, please see our guide.
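
As a rough sketch of the wiring involved, the data-plane deployment can expose its Admin API through a Service, and the controller can be pointed at that Service. The Service name, the `app: kong-gateway` label, and the headless setting are assumptions. The `CONTROLLER_KONG_ADMIN_SVC` variable assumes the controller's `--kong-admin-svc` flag, so check the CLI reference for your version before relying on it.

```yaml
# Service backed by the Admin API endpoints of the data-plane pods.
apiVersion: v1
kind: Service
metadata:
  name: kong-admin                # hypothetical name
  namespace: kong
spec:
  clusterIP: None                 # headless: endpoints are discovered individually
  selector:
    app: kong-gateway             # hypothetical label on the data-plane pods
  ports:
    - name: admin
      protocol: TCP
      port: 8444
      targetPort: 8444            # Kong's default Admin API TLS port
```

The controller container would then reference the Service in `namespace/name` form. This is a fragment of the container spec, not a complete manifest:

```yaml
env:
  - name: CONTROLLER_KONG_ADMIN_SVC
    value: kong/kong-admin
```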

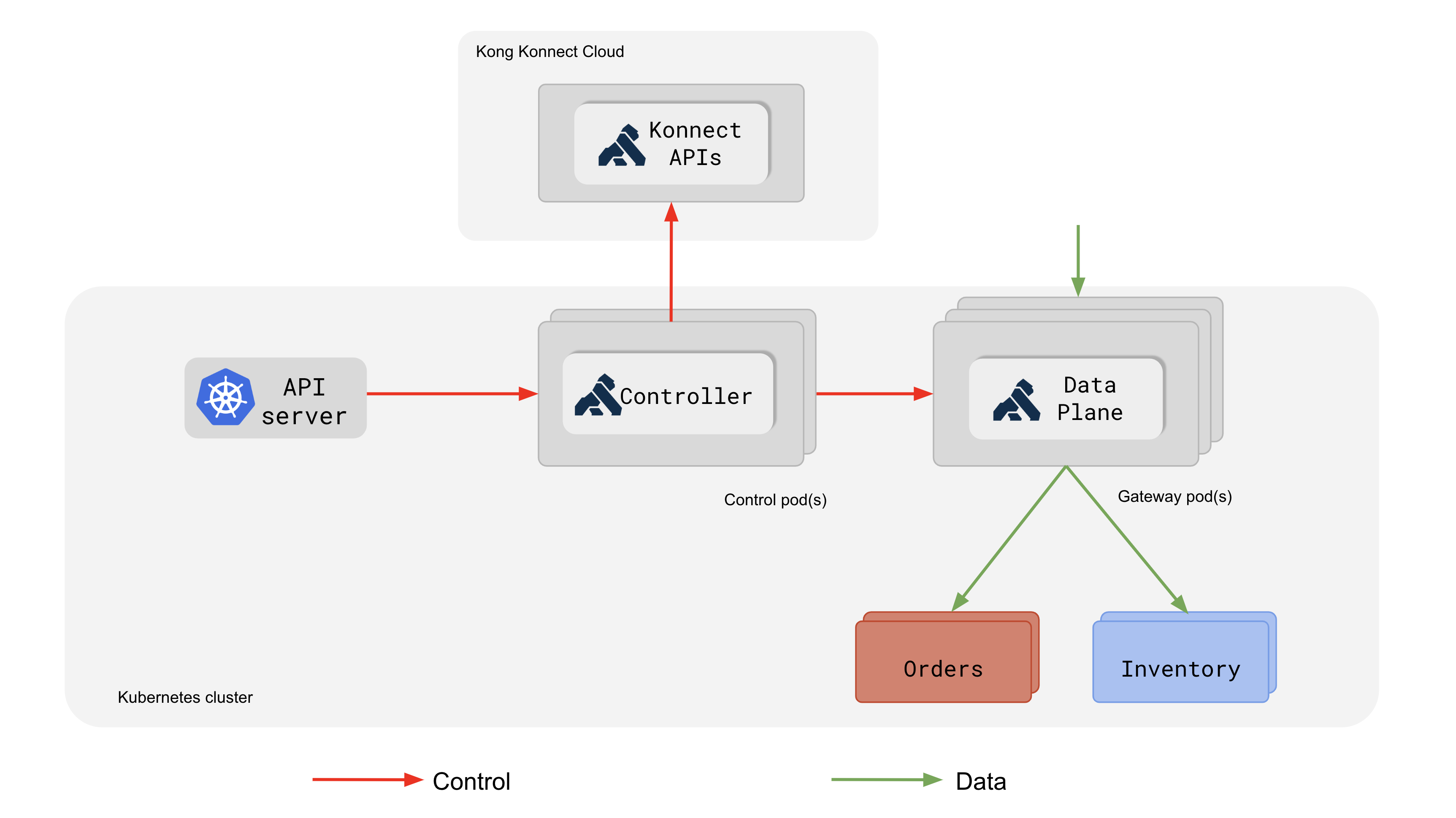

Konnect Integration

Kong Ingress Controller can be integrated with Kong’s Konnect platform. It’s an optional feature that allows configuring a Konnect control plane with the same configuration as the one used by Kong Ingress Controller for configuring local Kong Gateways. It enables using Konnect UI to inspect the configuration of your Kong instances in a read-only mode, track Analytics, and more.

For installation steps, please see the Kong Ingress Controller for Kubernetes Association page.

From an architecture perspective, the integration is similar to Gateway Discovery and builds on top of it. The difference is that Kong Ingress Controller additionally configures a control plane in Konnect using the public Admin API of Konnect’s Gateway Manager. The connection between Kong Ingress Controller and Konnect is secured using mutual TLS.

As Kong Ingress Controller calls Konnect’s APIs, outbound traffic from Kong Ingress Controller’s pods must be allowed to reach Konnect’s *.konghq.com hosts.

Kong Ingress Controller’s control plane in Konnect is read-only. Although the configuration displayed in Konnect will match the configuration used by proxy instances, it cannot be modified from the GUI. You must still modify the associated Kubernetes resources to change proxy configuration. In the event of a connection failure between Kong Ingress Controller and Konnect, Kong Ingress Controller will continue to update data plane proxy configuration normally, but will not update the control plane’s configuration until it can connect to Konnect again.